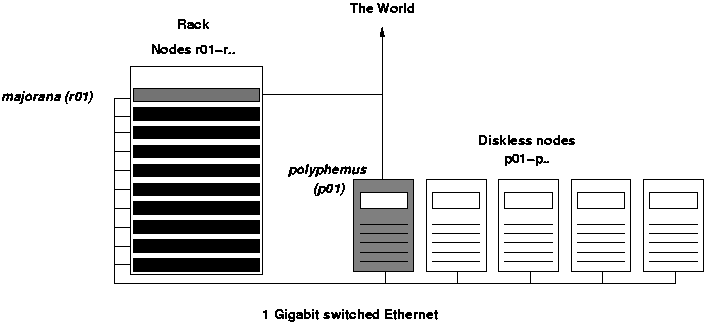

IRIDIA cluster architecture

Introduction

The cluster was built in 2002 and has been extended and modified since. Currently it consists of two servers, a number of nodes with disks, and a number of nodes without disks. The disk-less nodes are the ordinary PC-style boxes on the shelves in the server room, while the nodes with disks are the ones in the rack.

Currently, the servers as well as the nodes run 32-bit Debian GNU/Linux. The nodes in the rack are dual Opterons, however, so that might change at some point in the future.

majorana is the main server and provides the following services:

- NTP

- NIS

- Sun Grid Engine

- Vortex License

- DHCP for the computers on the shelves

- TFTP for diskless booting

Normally, users log on to majorana and submit jobs using the Sun Grid Engine.

Physical setup

The boot process

Maintaining the cluster is much easier if one knows the boot sequences of the clients. If the sequences are known, it is easier to find the source of errors and to determine which files should be modified.

There are two kinds of clients in the cluster: disk-less (the shelf cluster) and with-disk (the rack cluster). The boot sequence differs between the two, so each is explained separately.

Here is, step by step, what happens when a disk-less computer boots. majorana is in charge of handling this process. All configuration files are on it.

- Step 1

- The client is switched on. Its Ethernet card broadcasts a DHCP request on the network. The program in the card's firmware that deals with this is called PXE. (PXE is actually one of the standards that can be used to boot, and is developed mainly by Intel. 3Com cards, for instance, require different procedures than the one described here in order to receive a kernel to boot.)

The BIOS of the client must be configured to enable PXE. Moreover, the card should be set as the first boot device.

- Step 2

- majorana receives the request and starts a DHCP dialogue with the client. During the dialogue, the server tells the client its IP address, its name, the default gateways, and the name and time servers. Most importantly, it tells the client which computer to contact to receive the kernel (majorana again), which file to request (pxelinux.0) and where to find the root image to mount (on majorana).

The DHCP configuration file on majorana is /etc/dhcp.conf or /etc/dhcp3/dhcp.conf. pxelinux.0 is part of the syslinux package.

- Step 3

- The client starts a TFTP (Trivial FTP) connection to the server it was told to contact.

- Step 4

- The server receives the TFTP request, starts a TFTP server, and sends pxelinux.0 from /tftpboot/ to the client. The TFTP repository /tftpboot is passed as a command-line argument to the TFTP server; the command line is specified in /etc/inetd.conf.

The Debian way to modify this file is by using update-inetd.

- Step 5

- The client receives and executes pxelinux.0, which IS NOT the kernel! It is just a boot loader, like LILO or GRUB, with the difference that it works over the network. The client asks the server, via TFTP, for the boot configuration file (something like GRUB's menu.lst or LILO's lilo.conf). The file is expected to be in a subdirectory called pxelinux.cfg. pxelinux.0 tries several different file names until one of them is found on the server and retrieved. The first one is the IP address of the client converted to hexadecimal (if the IP is 192.168.100.2, then the file name is C0A86402). If that file is not found, pxelinux.0 removes the last character of the name and tries again, until a matching name is found (C0A8640, C0A864, etc.). When the shortest name also fails, it tries the name default, which is actually the only one present on the server.

/tftpboot/pxelinux.cfg/default

- Step 6

- The client receives and reads the configuration file, which specifies the name of the kernel to download and its parameters. The client then executes the last TFTP transfer to download the kernel.

The name specified in the configuration file must be the name of a file in /tftpboot.

- Step 7

- The PXE boot loader loads the kernel into memory and executes it. During boot, the kernel repeats the DHCP dialogue, starting again from step 1. Then it mounts the NFS directory specified during the dialogue, called nfsroot, on /.

The nfsroot is on majorana, in /var/lib/diskless/simple/root/. The list of exported directories is in /etc/exports.

After each change to this file, the NFS server should be restarted with /etc/init.d/nfs-kernel-server restart.

- Step 8

- The nfsroot contains the files and applications common to all disk-less clients. However, each client needs some private areas for its own programs. One example is the /var directory, which contains, among other things, the log files. To make problems easier to fix, each host must have its own logs, and therefore the /var directories must be kept separate. The same applies to /dev, /etc and /tmp. The private directories are on majorana. During boot, the client mounts its own private directories from the server. (The server uses the diskless package, which defines this division and structure, to manage and maintain all the directories.)

/var/lib/diskless/simple/<CLIENT_IP>/dev, /var/lib/diskless/simple/<CLIENT_IP>/etc, /var/lib/diskless/simple/<CLIENT_IP>/tmp and /var/lib/diskless/simple/<CLIENT_IP>/var.
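Steps 5 and 8 both derive names from the client's IP address, which can be sketched in a few lines of Python. This is only an illustration of the sequence described above, not code taken from pxelinux or from the diskless package; the function names and the `NFS_BASE` constant are hypothetical, and actual PXELINUX versions may try additional file names before the hexadecimal ones.

```python
import ipaddress

# Base directory of the diskless installation on the server (path from the text).
NFS_BASE = "/var/lib/diskless/simple"


def pxelinux_config_candidates(client_ip: str) -> list[str]:
    """File names requested under pxelinux.cfg/, in the order described in step 5."""
    # The IP address written as 8 upper-case hexadecimal digits.
    hex_name = format(int(ipaddress.IPv4Address(client_ip)), "08X")
    # Full name first, then one trailing character removed per attempt.
    candidates = [hex_name[:length] for length in range(len(hex_name), 0, -1)]
    # Last resort: the file called "default".
    candidates.append("default")
    return candidates


def private_directories(client_ip: str) -> list[str]:
    """Per-client directories mounted in step 8 (dev, etc, tmp and var)."""
    return [f"{NFS_BASE}/{client_ip}/{name}" for name in ("dev", "etc", "tmp", "var")]


if __name__ == "__main__":
    for name in pxelinux_config_candidates("192.168.100.2"):
        print("/tftpboot/pxelinux.cfg/" + name)
    for path in private_directories("192.168.100.2"):
        print(path)
```

Since only the file default exists on the server, every client in this setup falls through the whole list of hexadecimal names before it finds its configuration.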

Redundancy and replication

Note that none of the things mentioned below has actually been installed; this is merely a list of wishes and ideas.

Two servers provide two access points to the cluster. The services required by the nodes are split across the servers in order to reduce the workload and to improve robustness to failures. Below is a description of which services can be duplicated and what needs to be done in case of crashes.

- The NIS protocol already allows for more than one server, of which only one is the master server. The others are slave servers that hold a copy of the master and work only when the master is unreachable.

- NTP is used to keep the clocks of the cluster synchronized. The clients can access only one server (to check!), although there might be more in the network. A failure of the NTP server is not considered critical, because it will take days before the clocks of the clients differ unreasonably. Therefore, one server is enough.

- The Vortex License server cannot be copied, and it is already configured so that it can run only on polyphemus. If polyphemus crashes, it is still possible to start the license server on majorana by changing the MAC address of the latter to 00:0C:6E:02:41:C3 (polyphemus's MAC address). majorana can copy the file needed to run the server on a daily basis.

- Sun Grid Engine (SGE) can be run on only one computer (polyphemus). All the nodes access its data via NFS. majorana can copy the SGE directory daily, but if polyphemus crashes, all the nodes must be instructed to mount the new directory on majorana.

- /home directories. There can be only one NFS server in the network. majorana was chosen because it reacts faster: it has 2 CPUs, and when one is busy writing, the other can still process other incoming requests. Both majorana and polyphemus use a RAID architecture to prevent data loss. The only problem arises if majorana is no longer reachable. In this case, each process on the nodes that tries to access /home will be blocked until majorana comes up again.

- The root directories of the diskless nodes are on polyphemus. If polyphemus is not reachable, these nodes will be blocked waiting for polyphemus to come up again. majorana could keep a backup of these directories, but if the administrator wants to mount the backup directories on majorana, the nodes must be rebooted manually because they are not reachable via SSH. The DHCP configuration must also be changed to give the nodes the new mount point of the backup directories.

- In this solution, two different DHCP servers deal with the two groups of nodes because of the different ways of maintaining and updating them. However, a single server could do the same job. In case of failure of one server, the other can restart its DHCP server with a new configuration to deal with the whole cluster.
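The placement proposed in the list above can be summarized as a small lookup table, which makes it easy to see what is affected when one server goes down. The following Python sketch is only an illustration of the wish list: the `PLAN` dictionary and the `services_on` function are hypothetical names, and only the services whose host is stated explicitly in the list are included.

```python
# Proposed placement of services (a wish list, not the installed setup).
# Each entry records the host named in the text and the recovery it describes.
PLAN = {
    "Vortex License": {
        "host": "polyphemus",
        "recovery": "start on majorana after giving it polyphemus's MAC address",
    },
    "Sun Grid Engine": {
        "host": "polyphemus",
        "recovery": "nodes mount the daily copy of the SGE directory on majorana",
    },
    "/home directories": {
        "host": "majorana",
        "recovery": "none; processes accessing /home block until majorana returns",
    },
    "diskless root directories": {
        "host": "polyphemus",
        "recovery": "change DHCP to point at the backup on majorana, reboot nodes manually",
    },
}


def services_on(host: str) -> list[str]:
    """Names of the services that stop when the given host is unreachable."""
    return sorted(name for name, entry in PLAN.items() if entry["host"] == host)


if __name__ == "__main__":
    for host in ("majorana", "polyphemus"):
        print(host)
        for name in services_on(host):
            print("  " + name + ": " + PLAN[name]["recovery"])
```

NIS, NTP and DHCP are left out of the table because the list does not tie them to a single host in the proposed setup.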